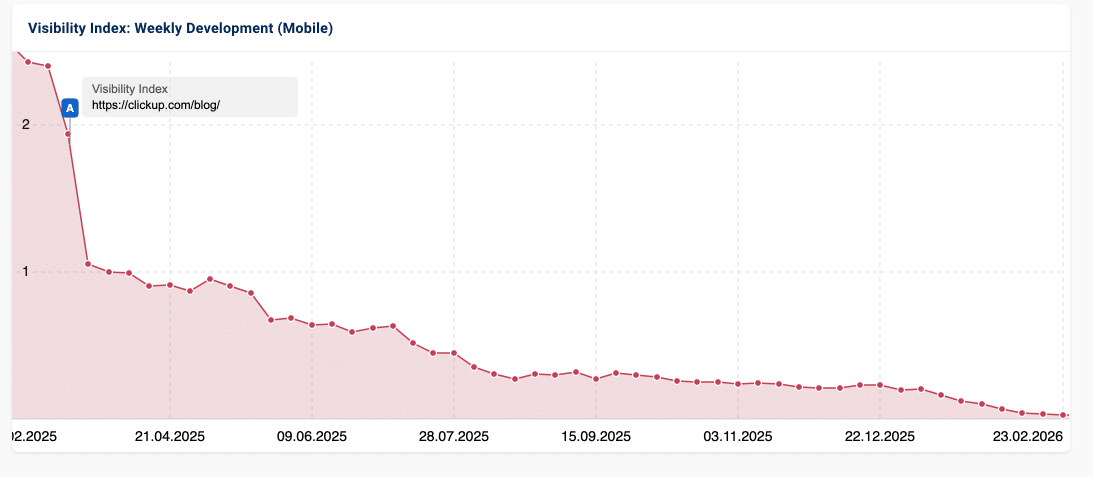

April 2026 has yielded a landmark case study in SEO: the collapse of the ClickUp blog. After the blog lost 97.6% of its organic visibility over 15 months, the industry is scrambling to explain how a DR 90+ authority site could fall off the face of the earth.

Most pundits have reached a verdict: Moral Failure.

They claim ClickUp was “too promotional.” They blame the 15 CTAs per page. They argue that putting ClickUp in the #1 spot of every listicle “offended” Google’s Helpful Content System.

They are wrong.

The Engine vs. The Paint Job

When a car breaks down, you don’t blame the driver for being rude. You open the hood.

The “Moral Failure” theory assumes Google is a literary critic. But Google’s Helpful Content System (HCS) is an AI-guided classifier. It doesn’t care about your ego; it cares about its own Compute Tax.

The 70,000-URL Nightmare & The Collision State

ClickUp scaled its blog to over 7,000 posts using a rigid, templated system. Each post featured a Table of Contents (TOC) with dozens of #anchor jump links.

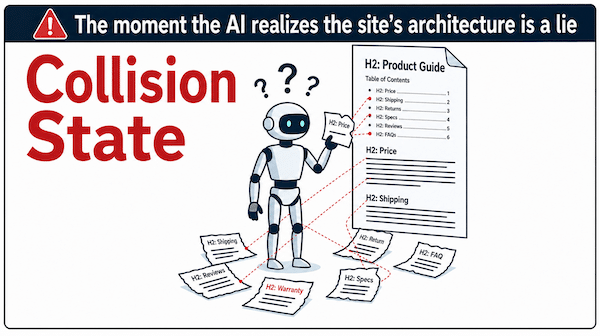

In traditional search, these were harmless “features.” In the world of AI Retrieval, they are toxic. To an AI crawler, those jump links behave like unique URLs, breaking the page down into “Mini-Pages.”
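To put rough numbers on the “Mini-Page” idea, the sketch below counts how many distinct URL strings a templated blog exposes once every TOC jump link is included, and how many documents remain after the #fragment is stripped with Python’s `urllib.parse.urldefrag`. The site (`example.com`), the ten-entry TOC, and the helper names are hypothetical; the output only illustrates the multiplication behind a 70,000+ figure, not how Google actually stores or crawls fragments.

```python
from urllib.parse import urldefrag


def count_url_variants(urls):
    """Return (distinct URL strings, distinct documents once #fragments are stripped)."""
    with_fragments = set(urls)
    documents = {urldefrag(u).url for u in urls}
    return len(with_fragments), len(documents)


def toc_urls(post_url, anchor_ids):
    """Hypothetical helper: the post URL plus one #fragment URL per TOC entry."""
    return [post_url] + [f"{post_url}#{anchor}" for anchor in anchor_ids]


# Hypothetical site: 7,000 templated posts, each with a 10-entry TOC.
urls = []
for i in range(7000):
    post = f"https://example.com/blog/post-{i}"
    urls.extend(toc_urls(post, [f"section-{n}" for n in range(10)]))

variants, documents = count_url_variants(urls)
print(variants, documents)  # 77000 7000
```

Seven thousand posts with ten anchors each yield 77,000 distinct URL strings, yet only 7,000 underlying documents once fragments are removed.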

When Google attempted to render ClickUp, it found a massive Collision State: the links promised in the TOC didn’t cleanly map to the raw code without heavy JavaScript rendering. ClickUp didn’t just have 7,000 posts; their architecture forced Google to process 70,000+ accidental duplicate Mini-Pages.
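One way to check your own pages for this kind of mismatch is to parse the raw server HTML, before any JavaScript runs, and list TOC links whose `#target` has no matching `id` (or legacy `<a name>`) in that markup. This is a minimal sketch using Python’s standard-library `html.parser`; the class and function names are hypothetical, and it is a self-audit heuristic, not a model of Google’s renderer.

```python
from html.parser import HTMLParser


class AnchorAudit(HTMLParser):
    """Collect in-page #fragment links and every fragment target in raw HTML."""

    def __init__(self):
        super().__init__()
        self.fragment_links = []
        self.ids = set()

    def handle_starttag(self, tag, attrs):
        attrs = dict(attrs)
        if attrs.get("id"):
            self.ids.add(attrs["id"])
        if tag == "a" and attrs.get("name"):
            self.ids.add(attrs["name"])  # legacy <a name="..."> targets also resolve
        href = attrs.get("href") or ""  # value is None for a bare <a href>
        if tag == "a" and href.startswith("#") and len(href) > 1:
            self.fragment_links.append(href[1:])  # compared literally, not percent-decoded


def unresolved_toc_links(raw_html):
    """Hypothetical helper: fragment targets with no matching id in unrendered HTML."""
    audit = AnchorAudit()
    audit.feed(raw_html)
    audit.close()
    return [target for target in audit.fragment_links if target not in audit.ids]


# Hypothetical page: the "features" heading only appears after client-side rendering.
raw = """
<nav class="toc">
  <a href="#pricing">Pricing</a>
  <a href="#features">Features</a>
</nav>
<h2 id="pricing">Pricing</h2>
<div id="app"></div>
"""
print(unresolved_toc_links(raw))  # ['features']
```

Feeding it the HTML your server returns (rather than what DevTools shows after rendering) is what makes the comparison meaningful.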

Google didn’t penalize ClickUp because they were “selfish.”

Google penalized ClickUp because they were expensive.

The HCS tagged the entire domain as an Asymmetrical Shell, a site that looks great to a human but is a fragmented, computational nightmare for an AI.

The Verification Buffer: Why Your TOC-Laden Content Is a Ticking Time Bomb

You might think, “My site uses TOCs and my traffic is fine!”

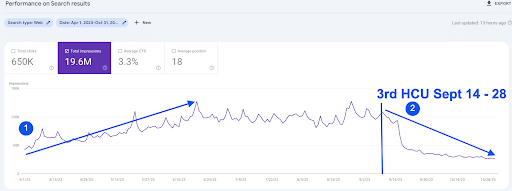

This is the illusion of the Verification Buffer. Standard discovery bots initially ingest your text and inflate your impressions (we call this climbing “AI Mountain”). In Google Search Console, this shows up as a massive increase in overall impressions. But weeks or months later, often during a Broad Core Update, Google schedules a high-compute render audit.

Once this audit, which we call the VizzEx Symmetry Gate, begins, the AI finds the Collision State, realizes it cannot afford to process your DOM, and evicts you from the RAG layers.

You fall off AI Mountain instantly.

Example: an affiliate site full of TOC anchor links, showing impressions growing with no increase in clicks before the September 2023 Helpful Content Update, when it fell off the mountain.
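The pattern in that example (impressions rising while clicks stay flat) can be flagged in a monthly Search Console performance export with a simple heuristic. This sketch assumes rows already aggregated as `(month, impressions, clicks)`, oldest first; the function name, the sample numbers, and the 20% growth threshold are all hypothetical. It detects the pattern only and says nothing about its cause.

```python
def flag_impression_only_growth(rows, min_impression_growth=0.2):
    """Hypothetical heuristic: periods where impressions rose by at least
    min_impression_growth versus the previous period while clicks stayed
    flat or fell.

    rows: list of (period_label, impressions, clicks), oldest first.
    Returns a list of (period_label, impression_growth, ctr).
    """
    flagged = []
    for (_, prev_impr, prev_clicks), (label, impr, clicks) in zip(rows, rows[1:]):
        if prev_impr == 0:
            continue  # skip empty periods to avoid dividing by zero
        growth = (impr - prev_impr) / prev_impr
        if growth >= min_impression_growth and clicks <= prev_clicks:
            ctr = clicks / impr if impr else 0.0
            flagged.append((label, round(growth, 2), round(ctr, 4)))
    return flagged


# Invented sample data, not real Search Console numbers.
rows = [
    ("2023-05", 100_000, 2_000),
    ("2023-06", 130_000, 1_950),
    ("2023-07", 180_000, 1_900),
    ("2023-08", 185_000, 1_900),
]
print([label for label, *_ in flag_impression_only_growth(rows)])  # ['2023-06', '2023-07']
```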

Doubling Down on the Wrong Problem

The most tragic part of the ClickUp data?

ClickUp added 2,815 of those posts after its traffic decline began in 2024. As traffic cratered, the team kept pumping out posts on the same broken template. They tried to “write” their way out of a “code” problem.

If you are paying an agency to rewrite your blog posts to “sound more human” while your HTML is still generating thousands of Collision States, you are fighting a battle you’ve already lost.

The Solution: Structural Signal Integrity

Success in 2026 requires moving from legacy SEO to Signal Architecture. You must eliminate the Collision State by passing the VizzEx Symmetry Gate, which demands mathematical parity between your raw code and your rendered layout.
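How parity is scored is not spelled out here. One measurable proxy, assumed for illustration rather than taken from VizzEx, is to compare the element `id`s in the raw server HTML with those in a rendered DOM snapshot (for example, the HTML saved from a headless browser) and report a Jaccard similarity plus the ids that exist only after rendering. All names and sample markup below are hypothetical.

```python
from html.parser import HTMLParser


class IdCollector(HTMLParser):
    """Collect every id attribute value in an HTML document."""

    def __init__(self):
        super().__init__()
        self.ids = set()

    def handle_starttag(self, tag, attrs):
        for name, value in attrs:
            if name == "id" and value:
                self.ids.add(value)


def collect_ids(html):
    parser = IdCollector()
    parser.feed(html)
    parser.close()
    return parser.ids


def id_parity(raw_html, rendered_html):
    """Hypothetical proxy, not the VizzEx Symmetry Gate itself.

    Returns (jaccard_similarity, ids_that_only_exist_after_rendering).
    """
    raw_ids = collect_ids(raw_html)
    rendered_ids = collect_ids(rendered_html)
    union = raw_ids | rendered_ids
    score = len(raw_ids & rendered_ids) / len(union) if union else 1.0
    return score, sorted(rendered_ids - raw_ids)


# Hypothetical snapshots of the same page before and after JavaScript runs.
raw = '<h2 id="pricing">Pricing</h2><div id="app"></div>'
rendered = (
    '<h2 id="pricing">Pricing</h2><div id="app">'
    '<h2 id="features">Features</h2><h2 id="faq">FAQ</h2></div>'
)
print(id_parity(raw, rendered))  # (0.5, ['faq', 'features'])
```

A score of 1.0 means every id a TOC could point to already exists in the server response; anything listed in the second value depends on client-side rendering.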

To see the exact mechanics of how Google’s Render Audit targets these vulnerabilities, read my technical breakdown: Hidden Divs and Table of Content Anchor Links: The Dual Killers of AI Visibility

Ready to stop guessing? The VizzEx Logic Engine is the only tool designed to identify and eliminate Collision States before any Verification Buffer expires. Contact us today for a Signal Architecture review.

Frequently Asked Questions

Why did ClickUp lose 97% of its organic search traffic?

Google penalized ClickUp because they were expensive. The HCS tagged the entire domain as an Asymmetrical Shell, a site that looks great to a human but is a fragmented, computational nightmare for an AI. ClickUp didn't just have 7,000 posts; their architecture forced Google to process 70,000+ accidental duplicate Mini-Pages.

How do Table of Contents anchor links hurt your SEO rankings?

To an AI crawler, TOC jump links behave like unique URLs, breaking the page down into 'Mini-Pages.' When Google attempted to render ClickUp, it found a massive Collision State: the links promised in the TOC didn't cleanly map to the raw code without heavy JavaScript rendering.

Why does my traffic look fine now if TOC anchor links are a problem?

This is the illusion of the Verification Buffer. Standard discovery bots initially ingest your text and inflate your impressions. But weeks or months later, often during a Broad Core Update, Google schedules a high-compute render audit. When the VizzEx Symmetry Gate audit begins, the AI finds the Collision State, realizes it cannot afford to process your DOM, and evicts you from the RAG layers.

What is the fix for a Google Helpful Content System penalty caused by site architecture?

Success in 2026 requires moving from legacy SEO to Signal Architecture. You must eliminate the Collision State by passing the VizzEx Symmetry Gate, which demands mathematical parity between your raw code and your rendered layout.

Will publishing more content help recover traffic lost to a Helpful Content Update?

ClickUp's own data suggests not: it added 2,815 posts after its traffic decline began in 2024. As traffic cratered, the team kept pumping out posts on the same broken template. They tried to 'write' their way out of a 'code' problem. If you are paying an agency to rewrite your blog posts to 'sound more human' while your HTML is still generating thousands of Collision States, you are fighting a battle you've already lost.