How a Table of Contents Feature Triggered the February 2026 SERP Collapse

In early February 2026, the search landscape didn’t just shift; it fractured. Within 72 hours, thousands of high-authority domains suffered a precipitous collapse in visibility, sparking an industry-wide panic. The post-mortem consensus from the usual pundits was swift: Google had finally dropped the hammer on “scaled AI content.”

But the forensic trail led somewhere else.

What follows is a condensed version of Carolyn Holzman’s forensic analysis. Retracing the same data trails used in the original analysis articles, she reached her own conclusion about listicles and other content formats: this isn’t an AI-generated content penalty but something else altogether.

While the industry fixated on an “AI penalty,” the actual data reveals a systemic structural failure: a collision between decades-old HTML navigation and an increasingly aggressive Helpful Content System (HCS). That failure points directly toward expertise architecture as the alternative to broken tactics. The collision is especially consequential because HCS and GEO (generative engine optimization) now share similar content rules. Because the offending structure (tables of contents and anchor links) is classified as “unhelpful” content, HCS suppresses it, or removes it from the index entirely, which means that content is no longer available for AI to cite.

Takeaway 1: The Table of Contents Trap

The primary culprit in this visibility bloodbath isn’t the origin of the words, but the “DNA” of the containers holding them. Specifically, the forensic evidence points to the HTML structure of Table of Contents (TOC) modules and jump links. For years, web designers have viewed these as UX best practices, yet for Google’s Helpful Content System, they have become an accidental duplication engine.

The logic is simple but devastating.

The <a> tags and #anchor links used for internal SAME PAGE jumps are misinterpreted by Google’s Helpful Content System as prompts to discover new URLs. When a page uses a TOC with 10 jump links, the system doesn’t see one page; it sees 11 unique paths to the same content: the page itself plus 10 anchored variants. This is counterintuitive to a designer, but to a bot, every href is a command to expand the crawl queue.

“Each one of these can be treated as a separate URL that leads to the same page of content.”

This is NOT the case with ordinary internal links between two different pages on the same domain, which are NOT part of this analysis.
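To make the arithmetic behind “11 unique paths” concrete, here is a minimal Python sketch. It illustrates the counting under stated assumptions and does not model Google’s systems: it counts every `href` on a page literally (the reading the analysis attributes to HCS) and, for contrast, counts again after removing the fragment, the part after `#` that browsers do not send to the server when requesting a page. `HrefCollector`, `count_paths`, and the `example.com` URLs are hypothetical.

```python
from html.parser import HTMLParser
from urllib.parse import urldefrag, urljoin


class HrefCollector(HTMLParser):
    """Collects the href of every <a> tag in an HTML fragment."""

    def __init__(self):
        super().__init__()
        self.hrefs = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            href = dict(attrs).get("href")
            if href:
                self.hrefs.append(href)


def count_paths(page_url, html):
    """Hypothetical audit helper.

    Returns (literal_paths, documents, same_page_links):
    - literal_paths: distinct URL strings, counting the page itself
    - documents: distinct URLs once fragments (#...) are removed
    - same_page_links: links that resolve back to page_url
    """
    collector = HrefCollector()
    collector.feed(html)
    collector.close()
    # Resolve relative hrefs, e.g. "#step-1" -> page_url + "#step-1".
    absolute = [urljoin(page_url, href) for href in collector.hrefs]
    literal_paths = {page_url, *absolute}
    documents = {urldefrag(url).url for url in literal_paths}
    base = urldefrag(page_url).url
    same_page = [url for url in absolute if urldefrag(url).url == base]
    return len(literal_paths), len(documents), len(same_page)


# A TOC with 10 jump links, matching the scenario described above.
toc = "".join(f'<a href="#step-{i}">Step {i}</a>' for i in range(1, 11))
print(count_paths("https://example.com/guide", toc))  # (11, 1, 10)
```

Appending a link to a different page, such as `<a href="/pricing">Pricing</a>`, returns `(12, 2, 10)`: the extra link points to a second document, which is the cross-page case the analysis sets aside.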

Takeaway 2: Even Google is Not Immune to Its Own Algorithm

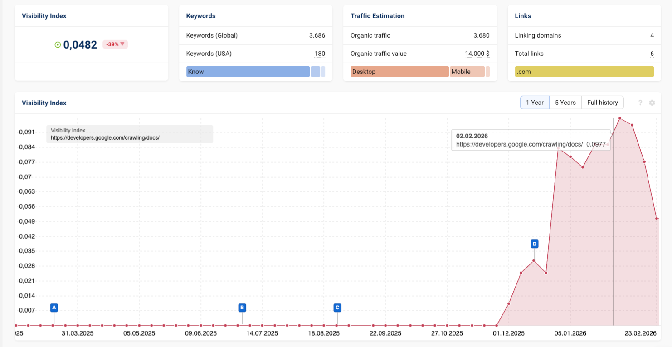

The theory that Google is simply “penalizing AI abuse” falls apart under the weight of a singular irony: Google’s own technical documentation was one of the victims. On February 2, 2026, developers.google.com experienced a “mountain-shaped” visibility drop that perfectly mirrored the crashes seen on sites like Grokipedia.

The timeline is critical. Following the December 2025 Core Update, Google appears to have effectively lowered the “tolerance level” for internal duplication, which it tags as “unhelpful content.” By early February, even the gold-standard Google documentation directory at developers.google.com/crawling/docs/ was apparently flagged.

The Structural Irony: Google’s Own Docs Hit By HCS

If the Helpful Content System were truly judging “quality” or “human effort,” Google’s own meticulously written developer docs would be untouchable.

Instead, the system flagged Google’s documentation as unhelpful content. If Google itself can’t pass its own “clarity score” because of TOC layouts, the problem isn’t the content; it’s the system’s inability to reconcile the canonical URL with the TOC jump-link URLs, since those results are policed by the rules of helpful-content classification. Understanding how Google classifies your domain is a prerequisite for grasping why structural semantic signals, not content quality, are now the primary lever HCS uses to assign authority.

Takeaway 3: Technical Alert—The “Canonical” is Now Just a Suggestion

The safety net we’ve relied on for years is either broken or ignored. Since the deprecation of the URL Parameter Tool in April 2022, the rel=canonical tag has been demoted from a directive to a mere suggestion.

The Parameter-to-URL Pipeline: How TOC Links Bloat Your Index

Google’s Crawl Team has warned that parameters, including fragment links (#), create what appear to be entirely new URLs. Each time text is appended to the slug this way, your site’s indexable footprint grows by another identical page.
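A crawl export can show how far this footprint has grown. The Python sketch below groups crawled URLs that differ only by fragment or by the order of their query parameters. It assumes parameter order never changes page content, which is typical but not guaranteed, and it keeps parameter values because some parameters do change content. `normalize`, `footprint`, and the URLs are hypothetical.

```python
from collections import defaultdict
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def normalize(url):
    """Drop the fragment, lowercase the host, and sort query parameters."""
    parts = urlsplit(url)
    query = urlencode(sorted(parse_qsl(parts.query, keep_blank_values=True)))
    return urlunsplit((parts.scheme, parts.netloc.lower(), parts.path, query, ""))


def footprint(urls):
    """Map each normalized URL to the crawled variants that collapse onto it."""
    groups = defaultdict(list)
    for url in urls:
        groups[normalize(url)].append(url)
    return dict(groups)


crawled = [
    "https://example.com/guide",
    "https://example.com/guide#intro",
    "https://example.com/guide#faq",
    "https://example.com/guide?b=2&a=1",
    "https://example.com/guide?a=1&b=2#faq",
    "https://example.com/pricing",
]
groups = footprint(crawled)
for key, variants in groups.items():
    print(f"{key}: {len(variants)} variant(s)")
print(f"{len(crawled)} crawled URLs -> {len(groups)} distinct documents")
```

Here six crawled URLs collapse onto three documents; on a real site, the ratio between the two counts is a rough gauge of how much of the footprint is duplication.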

The Death of Canonical Enforcement: How HCS Bypasses Canonical Tags

The Helpful Content System (HCS) now appears to bypass canonical instructions when performing its analysis. This reading is supported by the May 2024 Google API Content Warehouse leak, which revealed features such as “siteFocus” and “siteRadius.” These metrics indicate that Google has a system performing “horizontal content analysis”: judging your entire domain as a collection rather than evaluating pages in isolation. When HCS identifies hundreds of “parameterized” pages created by TOC links as duplicate content, it lowers the site’s clarity scores, dragging down the authority of the original canonical page. Grasping how this horizontal evaluation works requires understanding semantic content analysis and AI citation patterns, the foundational lens through which both HCS and LLMs assess whether your domain signals coherent topical authority.
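To show what a domain-wide, rather than page-by-page, view of duplication looks like, the Python sketch below fingerprints each page’s whitespace- and case-normalized text and measures what share of a site’s URLs repeat another URL’s content. This is an assumed, simplified stand-in: it is not how siteFocus, siteRadius, or any HCS score is computed, and production duplicate detection usually tolerates near-duplicates instead of requiring exact matches. `content_key`, `duplicate_share`, and the page data are hypothetical.

```python
import hashlib
import re
from collections import defaultdict


def content_key(text):
    """Fingerprint page text after collapsing whitespace and case."""
    normalized = re.sub(r"\s+", " ", text).strip().lower()
    return hashlib.sha256(normalized.encode("utf-8")).hexdigest()


def duplicate_share(pages):
    """pages maps URL -> extracted body text.

    Returns (share, clusters): the fraction of URLs whose content repeats
    another URL's, and the groups of URLs sharing identical content.
    """
    clusters = defaultdict(list)
    for url, text in pages.items():
        clusters[content_key(text)].append(url)
    duplicates = sum(len(urls) - 1 for urls in clusters.values())
    share = duplicates / len(pages) if pages else 0.0
    return share, list(clusters.values())


site = {
    "/guide": "How to bake bread.",
    "/guide#step-1": "How to  bake bread.",
    "/guide#step-2": "how to bake bread.",
    "/pricing": "Plans and prices.",
}
share, clusters = duplicate_share(site)
print(f"{share:.0%} of URLs duplicate another URL")  # 50% of URLs duplicate another URL
print(clusters)
```

The point of the sketch is the unit of analysis: the score belongs to the whole collection of URLs, so adding anchored variants of one page raises the site-level figure even though no single page changed.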

Takeaway 4: The “Bloom and Bust” Visibility Cycle

Forensic analysis of sites like Shopify, Elementor, and UpClick reveals a deceptive data pattern that often lures SEOs into a false sense of security before the crash.

- The Bloom: A site sees a sudden surge in impressions. This isn’t because the content is “better”; it’s because the H2 headers and TOC links have expanded the “query footprint,” allowing the site to show up for a massive range of query variations.

- The Bust: Once the Helpful Content Classifier completes its horizontal analysis, it flags the excessive duplication. The system then “condenses” the query footprint.

- The Result: The extra queries are stripped away, and the canonical page is suppressed. For enterprise sites, this looks like a sharp dip; for smaller sites, it can be a catastrophic washout.

Takeaway 5: Why H2 and H3 Tags—Not TOC Links—Drive AI Citations

A major driver for the proliferation of TOC widgets was the belief that they were necessary for citations in LLMs like Gemini or ChatGPT. However, insights from a closed-door Google Creator Summit in Washington, DC (September 2025) revealed that Google itself considers these table of contents features a “clarity” risk.

The reality is that LLMs don’t require functional jump links; they need semantic structure. AI models cite content based on H2 and H3 header tags because they provide a cost-effective way for the model to “spend tokens.” A clear semantic hierarchy allows an LLM to parse and attribute information without the “crawl waste” of a functional TOC. This is precisely where semantic H2 structure and AI-recognized link relationships converge—the same structural signals that help LLMs parse your content also determine how AI systems attribute authority across your domain.

“AI would cite content with just the H2s alone! The header structures are what makes it easy for LLMs to spend the tokens on your content.”
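The semantic structure described here can be checked directly by extracting a page’s H2/H3 hierarchy. Below is a minimal Python sketch using the standard library’s `html.parser`. It only shows which outline a parser can recover from heading tags and makes no claim about how any particular LLM tokenizes or cites a page. `HeadingOutline` and the sample HTML are hypothetical.

```python
from html.parser import HTMLParser


class HeadingOutline(HTMLParser):
    """Records (level, text) for every <h2> and <h3> element."""

    def __init__(self):
        super().__init__()
        self.outline = []
        self._level = None  # heading level currently open, if any
        self._buffer = []   # text collected inside that heading

    def handle_starttag(self, tag, attrs):
        if tag in ("h2", "h3"):
            self._level = int(tag[1])
            self._buffer = []

    def handle_data(self, data):
        if self._level is not None:
            self._buffer.append(data)

    def handle_endtag(self, tag):
        if self._level is not None and tag == f"h{self._level}":
            # Collapse whitespace left over from inline markup like <em>.
            text = " ".join("".join(self._buffer).split())
            self.outline.append((self._level, text))
            self._level = None


page = (
    "<h1>Bread Guide</h1>"
    "<h2>Ingredients</h2><p>Flour, water, salt.</p>"
    "<h3>Choosing <em>flour</em></h3><p>...</p>"
    "<h2>Baking</h2>"
)
parser = HeadingOutline()
parser.feed(page)
parser.close()
for level, text in parser.outline:
    print("  " * (level - 2) + text)
```

This prints `Ingredients`, an indented `Choosing flour`, and `Baking`. The outline comes entirely from heading tags; the page needs no jump links or TOC for it to be recoverable.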

Survive With Semantic Structure

The February 2026 visibility collapse is a warning shot. We are entering an era where the “DNA of our HTML” is being weaponized, even against Google itself, by an AI-guided Helpful Content System that is no longer restrained by traditional canonical rules. In the age of the Helpful Content classifier, the industry’s obsession with a “user-friendly” navigation tool for readers has created a false narrative of unhelpful duplicate content, which HCS is now programmed to suppress from results.

For forensic analysts, the conclusion is unavoidable: your authority cannot protect you from your architecture. To survive, we must strip away the functional “TOC” and return to pure semantic structure. We must ask ourselves: Is a clickable Table of Contents worth the risk of being labeled “unhelpful” by the very systems we are trying to satisfy?

The data suggests the answer is a resounding no. And if you’re already thinking about how to respond, it’s worth understanding why page-level fixes no longer take effect immediately: the same systemic forces that created this collapse make isolated repairs insufficient.
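For teams that decide to strip the functional TOC, a minimal Python sketch of the cleanup follows. It unwraps `<a>` tags whose `href` begins with `#`, keeping their visible text, and leaves headings (including their `id` attributes) and links to other pages untouched, consistent with the carve-out for cross-page internal links. `JumpLinkStripper` and `strip_jump_links` are hypothetical and deliberately simple: character references are decoded and text is re-escaped, so output may differ cosmetically from the input, and the sketch is meant for template fragments rather than as a full HTML rewriter.

```python
from html import escape
from html.parser import HTMLParser


class JumpLinkStripper(HTMLParser):
    """Re-emits HTML with same-page jump links (<a href="#...">) unwrapped."""

    def __init__(self):
        super().__init__(convert_charrefs=True)
        self.out = []
        self._dropped = []  # one flag per open <a>: True if its tags were removed
        self._raw = False   # inside <script>/<style>, where text must not be escaped

    def handle_starttag(self, tag, attrs):
        if tag in ("script", "style"):
            self._raw = True
        if tag == "a":
            drop = (dict(attrs).get("href") or "").startswith("#")
            self._dropped.append(drop)
            if drop:
                return  # keep the link text, discard the tag itself
        self.out.append(self.get_starttag_text())

    def handle_startendtag(self, tag, attrs):
        # Self-closing tags such as <br/> are copied through unchanged.
        self.out.append(self.get_starttag_text())

    def handle_endtag(self, tag):
        if tag in ("script", "style"):
            self._raw = False
        if tag == "a" and self._dropped and self._dropped.pop():
            return  # closing tag of an unwrapped jump link
        self.out.append(f"</{tag}>")

    def handle_data(self, data):
        self.out.append(data if self._raw else escape(data, quote=False))

    def handle_comment(self, data):
        self.out.append(f"<!--{data}-->")

    def handle_decl(self, decl):
        self.out.append(f"<!{decl}>")


def strip_jump_links(html):
    stripper = JumpLinkStripper()
    stripper.feed(html)
    stripper.close()
    return "".join(stripper.out)


before = (
    '<nav><a href="#ingredients">Ingredients</a> | '
    '<a href="/pricing">Pricing</a></nav>'
    '<h2 id="ingredients">Ingredients</h2>'
)
print(strip_jump_links(before))
```

The jump link becomes plain text, the `/pricing` link survives, and the `<h2>` keeps its `id`, so the heading hierarchy remains intact.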